Every digital camera has at its heart a solid-state device which, like film, captures the light coming in through the lens to form an image. This device is called a sensor. In this article we explain the different sensor types and sizes.

Consumers now have the option of a number of different cameras with differently-sized sensors, all at the same price point. Each type of sensor bears both advantages and disadvantages – with such a choice on offer it pays to understand what these are, particularly if you are considering investing in a new model. The following feature looks at these in more detail, and at sensors in general. But first, what exactly is a sensor?

What is a sensor?

A sensor is a solid-state device which captures the light required to form a digital image. While the process of manufacturing a sensor is well outside the scope of this feature, what essentially happens is that wafers of silicon are used as the base for the integrated circuits, which are built up via a process known as photolithography. Here, patterns of the circuitry are repeatedly projected onto the (sensitised) wafer, which is then treated so that only the pattern remains. Funnily enough, this bears many similarities to traditional photographic processes, such as those used in a darkroom when developing film and printing.

This process creates millions of tiny wells known as pixels, and in each pixel there is a light-sensitive element which can sense how many photons have arrived at that particular location. As the charge output from each location is proportional to the intensity of light falling onto it, it becomes possible to reproduce the scene as the photographer originally saw it – but a number of processes have to take place before any of this is possible.

As a sensor is an analogue device, this charge first needs to be converted into a signal, which is amplified before it is converted into digital form. So, an image may eventually appear as a collection of different objects and colours, but at a more basic level each pixel is simply given a number so that it can be understood by a computer (if you zoom into any digital image far enough you will be able to see that each pixel is simply a single coloured square).
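As a rough illustration of that last step, the sketch below amplifies a handful of analogue pixel signals and turns each one into a whole number. The function names, the gain, the full-scale voltage and the 12-bit depth are all assumptions chosen for the example rather than figures from any particular camera.

```python
def quantise(voltage, full_scale=1.0, bits=12):
    """Map an analogue voltage in [0, full_scale] to an integer code.

    full_scale and bits are illustrative assumptions only.
    """
    levels = 2 ** bits                                # 4096 codes for 12 bits
    clipped = min(max(voltage, 0.0), full_scale)      # keep within the valid range
    return int(clipped / full_scale * (levels - 1) + 0.5)  # round to nearest code


def read_out(signals, gain=2.0, full_scale=1.0, bits=12):
    """Amplify each pixel's signal, then convert it to a number."""
    return [quantise(s * gain, full_scale, bits) for s in signals]


if __name__ == "__main__":
    # Three pixels: dark, mid-grey and bright (hypothetical signal levels).
    print(read_out([0.0, 0.25, 0.5]))
```

Anything that would exceed the full-scale voltage after amplification is simply given the highest available number, which is one way a highlight ends up recorded as pure white.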

As well as being an analogue device, a sensor is also colourblind. For it to sense different colours a mosaic of coloured filters is placed over the sensor, with twice as many green filters as there are of each of red and blue, to match the heightened sensitivity of the human visual system towards the colour green. This system means that each pixel only receives colour information for either red, green or blue – as such, the values for the other two colours have to be estimated by a process known as demosaicing. The alternative to this system is the Foveon sensor, which uses layers of silicon to absorb different wavelengths, the result being that each location receives full colour information.
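To make the mosaic idea concrete, here is a minimal sketch of what a filtered sensor records. The 2x2 tile layout, the names and the tiny test image are assumptions for illustration; real sensors differ in which corner of the tile holds which colour.

```python
# One 2x2 tile of a Bayer-style mosaic: two green filters, one red, one blue.
# The exact arrangement within the tile is an assumption for this sketch.
BAYER_TILE = (("G", "R"),
              ("B", "G"))

CHANNEL = {"R": 0, "G": 1, "B": 2}


def filter_colour(row, col):
    """Colour of the filter sitting over the pixel at (row, col)."""
    return BAYER_TILE[row % 2][col % 2]


def mosaic(rgb_image):
    """Keep only the single colour value each pixel's filter lets through.

    rgb_image is a list of rows, each row a list of (r, g, b) tuples.
    """
    return [
        [pixel[CHANNEL[filter_colour(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


def count_filters(height, width):
    """Count how many pixels sit under each filter colour."""
    counts = {"R": 0, "G": 0, "B": 0}
    for r in range(height):
        for c in range(width):
            counts[filter_colour(r, c)] += 1
    return counts


if __name__ == "__main__":
    print(count_filters(4, 4))  # green appears twice as often as red or blue
```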

The more the merrier?

At one point it was necessary to develop sensors with more and more pixels, as the earliest types were not sufficient for the demands of printing. That barrier was soon broken but sensors continued to be developed with a greater number of pixels, and compacts that once had two or three megapixels were soon replaced by the next generation of four or five megapixel variants. This has now escalated up to the 20MP compact cameras on the market today. As helpful as this was to manufacturers from a marketing perspective, it did little to educate consumers as to how many pixels were necessary – and more importantly, how many were too many.

More pixels can mean more detail, but the size of the sensor is crucial for this to hold true, essentially because smaller pixels are less efficient than larger ones. The main attributes which separate images from compact cameras (with small sensors) from those taken with a DSLR, CSC or large-sensor compact are dynamic range and noise, and the latter types of camera fare better in both respects. As the pixels on a larger sensor can be made larger, they can hold more light in relation to the noise created by the sensor through its operation, and a higher ratio in favour of the signal produces a cleaner image. Noise reduction technology, used in most cameras, aims to cover up any noise which has formed in the image, but this usually comes at the expense of detail. It is standard on basic cameras and usually cannot be deactivated, unlike on some advanced cameras where the option to do so is provided (meaning you can take more care to process the noise out later yourself).
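The signal-to-noise argument can be sketched with a deliberately simplified model. The code below assumes that the light a pixel gathers scales with its area, and that noise consists only of photon shot noise (the square root of the signal) plus a fixed read noise; the pixel sizes, the light level and the read-noise figure are hypothetical.

```python
import math


def photons_collected(pixel_pitch_um, photons_per_um2=100.0):
    """Light gathered by a square pixel, assuming it scales with pixel area."""
    return photons_per_um2 * pixel_pitch_um ** 2


def snr(photons, read_noise=5.0):
    """Signal-to-noise ratio under a simplified two-source noise model.

    Shot noise is sqrt(photons); read noise is a fixed assumed value.
    The two are combined in quadrature.
    """
    return photons / math.sqrt(photons + read_noise ** 2)


if __name__ == "__main__":
    # Hypothetical pixel pitches for a small-sensor and a large-sensor camera.
    for pitch in (1.5, 6.0):
        print(pitch, "um pixel, SNR:", round(snr(photons_collected(pitch)), 1))
```

Under these assumptions the larger pixel always comes out ahead for the same exposure, which is the effect described above.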

The increased capacity of larger pixels also means that they can contain more light before they are full – and a full pixel is essentially a blown highlight. When this happens on a densely populated sensor, it’s easy for the charge from one pixel to overflow to neighbouring sites, which is known as blooming. By contrast, a larger pixel can contain a greater range of tonal values before this happens, and certain varieties of sensor will be fitted with anti-blooming gates to drain off excess charge. The downside to this is that the gates themselves require space on the sensor, and so again compromise the size of each individual pixel.
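A minimal sketch of the capacity idea follows. It treats a pixel as a bucket with a fixed capacity and estimates the usable tonal range as the ratio between that capacity and an assumed noise floor, expressed in stops. The names and numbers are illustrative, and the model ignores blooming altogether: excess charge is simply discarded.

```python
import math


def expose(photons, full_well):
    """Charge held by a pixel; anything above capacity is lost (a blown highlight)."""
    return min(photons, full_well)


def dynamic_range_stops(full_well, noise_floor):
    """Usable range in stops, taken as log2 of capacity over the noise floor."""
    return math.log2(full_well / noise_floor)


if __name__ == "__main__":
    # Hypothetical capacities for a small and a large pixel, same noise floor.
    for capacity in (5000, 60000):
        print(capacity, "->", round(dynamic_range_stops(capacity, 5.0), 1), "stops")
```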

Types of Sensor

CCD sensor

Used for a number of years in video and stills cameras, CCDs long offered superior image quality to CMOS sensors, with better dynamic range and noise control.

To this day they are used in budget compacts, but their higher power consumption and more basic construction have meant that they have been largely replaced by CMOS alternatives. They are, however, still used in medium format backs where the benefits of CMOS technology are not as necessary.

CMOS sensor

Long seen as an inferior competitor to the CCD, CMOS sensors have progressed to match or better the CCD standard.

With more functionality built on-chip than CCDs, CMOS sensors are able to work more efficiently and require less power to do so, and are better suited to high-speed capture.

As such, they are used in cameras where burst shooting is key, such as Canon’s 1D series of DSLR cameras.

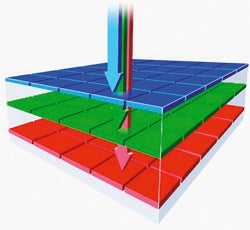

Foveon X3 sensor

Foveon X3 is based on CMOS technology and used in Sigma’s compact cameras and DSLRs.

The Foveon X3 system does away with the Bayer filter array, and opts for three layers of silicon in its place.

Shorter wavelengths are absorbed nearer to the surface while longer ones travel further through.

As each photosite receives a value for each of red, green and blue, no demosaicing is required.

LiveMOS sensor

LiveMOS technology has been used for the Four Thirds and Micro Four Thirds range of cameras.

LiveMOS is claimed to give the image quality of CCDs with the power consumption of CMOS sensors.

Sensor Sizes

A guide to the main sensor sizes used in today’s cameras

Full Frame – 36 x 24mm

The largest sensor size found in 35mm DSLRs. It shares its dimensions with a frame of 35mm negative film, and so applies no crop factor to lenses. It used to be the reserve of very high-end cameras, for professionals only, but the technology is getting more affordable. It also used to be true that full-frame sensors could only be found in very large cameras, but some manufacturers have found ways to shrink camera sizes while keeping a large sensor.

Cameras: Canon EOS 1DX Mark II, Canon EOS 5D Mark III, Canon EOS 5DS/R, Canon EOS 6D, Nikon D5, Nikon D810, Nikon D750, Nikon D610, Sony A7 II, Sony A7S II, Sony A7R II, Sony RX1R II

APS-H – 28.1 x 18.7mm

This type of sensor was featured in Canon’s older 1D series of cameras. These typically combine the slightly larger sensor with a modest pixel count for speed and high ISO performance, and apply a 1.3x crop factor to mounted lenses. The crop factor was useful for shooting sport and wildlife as it effectively lengthened the lens you were using, but the sensor size has since been discontinued.

Cameras: Canon 1D Mark IV, Canon 1D Mark III

APS-C – 23.6 x 15.8mm (varies)

The most common sensor size in consumer and semi-professional DSLRs, the APS-C sensor applies a crop factor of between 1.5x and 1.7x to mounted lenses. It’s also found in Sony compact system cameras, and even some compact cameras.

Cameras: Nikon D500, Nikon D7200, Nikon D5500, Nikon D5300, Canon EOS 7D Mark II, Canon EOS 80D, Canon EOS 760D, Sony A6300, Fuji X100T, Fuji X70

Four Thirds – 17.3 x 13mm

As used in both Four Thirds DSLRs and Micro Four Thirds models, these are roughly a quarter of the size of a full-frame sensor. Their size results in a 2x crop factor, doubling the effective focal length of a mounted lens.

Cameras: Olympus PEN F, Olympus OM-D E-M1, Panasonic GX8, Panasonic GH4

One Inch – 13.2 x 8.8mm

These sensors have become very popular in recent years, especially in premium compact cameras. They offer a sensor which is much larger than that of a conventional compact camera, but still small enough to fit in pocket-friendly devices.

Cameras: Canon G5X, Canon G7X II, Canon G9X, Sony RX100 II, Panasonic TZ100

1/1.7in

Previously, this was usually the largest sensor size you’d find in compact cameras, and it is still bigger than the sensors used in budget compacts. This size is relatively rare nowadays, as most manufacturers jump to a one-inch format sensor for their premium offerings.

Cameras: Canon PowerShot S95

1/2.3in

Among the smallest sizes of sensor used in today’s compacts. While cheaper to manufacture than larger varieties, the smaller pixels aren’t quite as efficient, giving rise to noisy images and a reduced dynamic range.

Cameras: Canon SX720, Panasonic TZ80, Nikon A900

Please note: The inch-based measurements (the last two, and the one-inch format) do not refer directly to the size of the sensor – rather, they are derived from the size of the video camera tubes which were used in television cameras.
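The crop factors quoted in the size guide above can be approximated from the sensor dimensions alone, by comparing each sensor's diagonal with that of a full-frame sensor. The sketch below assumes that diagonal-ratio definition; the function names are made up for the example, and the dimensions are those listed in this guide.

```python
import math

FULL_FRAME_MM = (36.0, 24.0)  # full-frame dimensions from the guide above


def crop_factor(width_mm, height_mm):
    """Ratio of the full-frame diagonal to this sensor's diagonal."""
    return math.hypot(*FULL_FRAME_MM) / math.hypot(width_mm, height_mm)


def equivalent_focal_length(focal_mm, width_mm, height_mm):
    """Focal length giving the same field of view on a full-frame camera."""
    return focal_mm * crop_factor(width_mm, height_mm)


if __name__ == "__main__":
    sizes = {
        "APS-H": (28.1, 18.7),
        "APS-C": (23.6, 15.8),
        "Four Thirds": (17.3, 13.0),
    }
    for name, (w, h) in sizes.items():
        print(name, round(crop_factor(w, h), 1))
```

With the dimensions listed, this gives roughly 1.3x for APS-H, 1.5x for APS-C and 2x for Four Thirds, in line with the figures quoted for each format.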

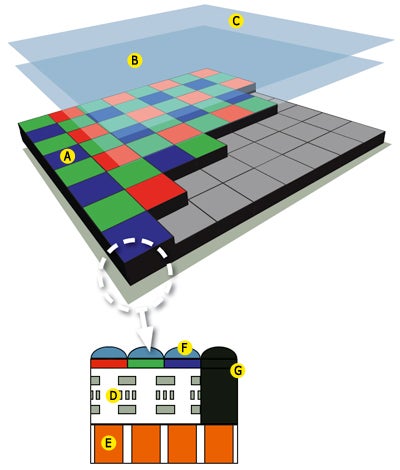

Anatomy of a sensor

A – Colour filter array

The vast majority of cameras use the Bayer GRGB colour filter array, which is a mosaic of filters used to determine colour. Each pixel only receives information for one colour – the process of demosaicing determines the other two.
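One very simple way to picture demosaicing is to fill in a missing colour by averaging the nearest pixels that did record it. The sketch below estimates green at a red or blue site from the pixels directly above, below, left and right, which all sit under green filters in a Bayer layout. This is only an illustration; the algorithms used in real cameras and raw converters are considerably more sophisticated, and the function name and sample values are hypothetical.

```python
def estimate_green(raw, row, col):
    """Estimate green at a red or blue site from its green neighbours.

    raw is a list of rows of single recorded values. Neighbours that would
    fall outside the image are skipped.
    """
    height, width = len(raw), len(raw[0])
    candidates = ((row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1))
    neighbours = [
        raw[r][c] for r, c in candidates
        if 0 <= r < height and 0 <= c < width
    ]
    return sum(neighbours) / len(neighbours)


if __name__ == "__main__":
    # Hypothetical 3x3 crop laid out as G R G / B G B / G R G.
    raw = [[10, 200, 12],
           [50, 14, 60],
           [16, 210, 18]]
    print(estimate_green(raw, 0, 1))  # green estimate at the top red site
```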

B – Low-pass filter / Anti-aliasing filter

These are designed to limit the spatial frequency of the detail reaching the sensor, to prevent the effects of aliasing (such as moiré patterning) in fine, repetitive details. What results is a slight blurring of the image, which compromises detail, but manufacturers attempt to rectify this by sharpening the image. Many modern sensors feature a filter-less design, or a double filter which cancels the effects of the anti-aliasing filter.

C – Infrared filter (hot mirror)

Camera sensors are sensitive to some infrared light. A hot mirror in between the lens and the low-pass filter prevents this from reaching the sensor, and helps minimise any colour casts or other unwanted artefacts from forming.

D – Circuitry

CCD and CMOS sensors differ in terms of their construction. CCDs collect the charge at each photosite, and transfer it from the sensor through a light-shielded vertical array of pixels, before it is converted to a signal and amplified. CMOS sensors convert charge to voltage and amplify the signal at each pixel location, and so output voltage rather than charge. CMOS sensors may also typically incorporate extra transistors for other functionality, such as noise reduction.

E – Pixel

A pixel contains a light-sensitive photodetector, which measures the amount of light (photons) falling onto it. This process releases electrons from the silicon, which form the charge at each photosite.

F – Microlenses

Microlenses help funnel light into each pixel, thereby increasing the sensitivity of the sensor. These are particularly important as a proportion of most sensors’ surface area is taken up by necessary circuitry.

G – Black pixels

Not all pixels on a sensor are used for capturing an image. In fact, those around the peripheries are typically shielded from light, which allows the camera to see how much dark current builds up during an exposure when there is no illumination – this is one of the causes of noise in images. By measuring this, the camera is able to make a rough estimate as to how much has built up in the active pixels, and subtracts this value from them. The result is a cleaner image with less noise.
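The subtraction described above can be sketched as follows. The example assumes the camera simply averages the readings from the shielded pixels and subtracts that single value from every active pixel; real cameras may work row by row or apply more elaborate corrections, and the function name and numbers are hypothetical.

```python
def subtract_dark_level(active_rows, shielded_pixels):
    """Subtract the average shielded-pixel reading from every active pixel.

    Results are floored at zero so that no pixel ends up with a negative value.
    """
    dark_level = sum(shielded_pixels) / len(shielded_pixels)
    return [[max(value - dark_level, 0.0) for value in row] for row in active_rows]


if __name__ == "__main__":
    active = [[10, 12],
              [8, 3]]
    shielded = [4, 4, 4, 4]  # readings from the light-shielded border pixels
    print(subtract_dark_level(active, shielded))
```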