Lens resolution figures have long been stated in line-pairs per millimetre but in the digital arena it is common to use cycles per pixel: what is the difference and how are these two resolution metrics related to each other?

What do we mean when we talk about lens resolution? One clue is given by the expression: “the picture was so sharp you could see every individual hair on his head”. That is really what resolution is all about… it is the ability to discern fine details that might otherwise blur into a general area with indistinct edges between what were actually separate things in real life (individual strands of hair in this case).

Technically, the model we use is similar to a picket fence that has white bars separated by black intervening spaces. When standing very close to the fence it is easy to see that there are distinct white bars and black spaces but as you move further and further away the detail starts to disappear and there comes a point at which the human eye (or a camera lens) can no longer resolve the individual bars and spaces. That is the limiting resolution of the system.

You can easily imagine holding a little 1mm square mask in front of your eye and seeing how many white and black bars you can count in the final stage before the white and black regions fuse into a mass of grey. If there were 10 bars-and-spaces then we would say your eye could see 10 line-pairs per millimetre. (Actually the resolution should be measured on the retina in the back of your eye but bear with me on this as it is the principle that matters more than the exact technical details.)

If your eye were a full-frame digital sensor, which measures 36x24mm, then 10 line-pairs per millimetre would allow you to photograph a distant fence that was 360 bars-and-gaps long. If you tried to squeeze a longer length of fence into the picture, by standing further back, the bars-and-gaps would blur together: you would get more into the picture but you would lose detail in the process.

Of course, in order to see 10 line-pairs per millimetre your eye needs to be able to detect 20 “things” per millimetre – each thing being either a white bar or a black space. So in order to record your 360 bars-and-gaps you would need to have at least 720 pixels across the picture – half of them recording white bars and the other half recording the black spaces in between the bars.
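The arithmetic in the last two paragraphs can be sketched in a few lines of Python. This is only an illustration of the two-pixels-per-line-pair rule described above; the function name `pixels_needed` is made up for this example.

```python
import math


def pixels_needed(line_pairs_per_mm: float, width_mm: float) -> int:
    """Minimum number of pixels across the frame needed to record the
    given line-pair density, assuming one pixel per bar and one per gap."""
    total_line_pairs = line_pairs_per_mm * width_mm  # bars-and-gaps across the frame
    return math.ceil(2 * total_line_pairs)           # two "things" per line-pair


# 10 line-pairs per millimetre across a 36mm-wide full-frame sensor
print(pixels_needed(10, 36))  # 720
```

The 360 bars-and-gaps from the fence example become 720 pixels because each pair needs one pixel for the bar and one for the gap.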

The same works in reverse. If you know you have 3000 pixels across your sensor then you know you can record a maximum of 1500 line-pairs. And given that the sensor measures 36mm across, you can divide 1500 by 36 to calculate that the maximum resolution of the sensor must be about 42 line-pairs per millimetre. (In fact there is a complication, known as the Nyquist Criterion, that halves this figure but we will overlook that here.)
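As a rough sketch, the reverse calculation looks like this in Python. The function name `sensor_limit_lp_per_mm` is invented for the example, and the Nyquist complication is deliberately left out, just as it is in the text.

```python
def sensor_limit_lp_per_mm(pixels_across: int, width_mm: float) -> float:
    """Upper limit on line-pairs per millimetre for a sensor, assuming
    two pixels per line-pair and ignoring the Nyquist complication."""
    max_line_pairs = pixels_across / 2  # one pixel for the bar, one for the gap
    return max_line_pairs / width_mm


limit = sensor_limit_lp_per_mm(3000, 36.0)
print(f"{limit:.1f} lp/mm")  # 41.7 lp/mm
```

Rounded to a whole number, that is the 42 line-pairs per millimetre quoted above.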

The big question is how well any given lens is matched to the sensor – and therefore how closely the lens allows the sensor to approach its maximum resolution. One way to determine this would be to calculate a line-pairs per millimetre figure and then express this as a fraction (or percentage) of the sensor’s theoretical figure.

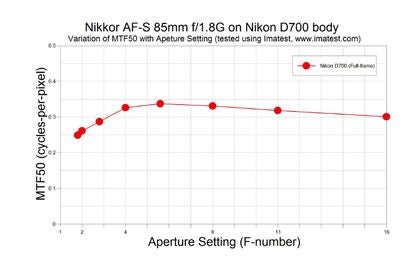

It would be more direct, however, to calculate how close the lens gets to recording one “thing” on each pixel. This is the same as recording half-a-pair on each pixel. And if we call a complete pair a “cycle” then the theoretical maximum resolution is half-a-cycle-per-pixel. And that is why the resolution graphs that appear in my lens tests for What Digital Camera have a vertical axis that measures cycles-per-pixel. The highest theoretical figure possible is 0.5 cycles-per-pixel – although anything above 0.25 cycles-per-pixel is fine according to the Nyquist Criterion. As was pointed out in my last blog entry, Olympus has become the first lens manufacturer (amongst the lenses I have tested) to hit the magical 0.5 cycles-per-pixel performance figure.
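To make the link between the two metrics concrete, here is a small Python sketch that turns a line-pairs per millimetre figure into cycles-per-pixel. The function name and the 3000-pixel example sensor are assumptions chosen to match the earlier arithmetic, not anything taken from the published lens tests.

```python
def lp_per_mm_to_cycles_per_pixel(
    lp_per_mm: float, width_mm: float, pixels_across: int
) -> float:
    """Convert line-pairs per millimetre into cycles-per-pixel,
    treating one line-pair as one cycle."""
    total_cycles = lp_per_mm * width_mm  # cycles across the whole sensor width
    return total_cycles / pixels_across


# A hypothetical 36mm-wide sensor with 3000 pixels across its width
sensor_limit = lp_per_mm_to_cycles_per_pixel(1500 / 36, 36.0, 3000)
modest_lens = lp_per_mm_to_cycles_per_pixel(10, 36.0, 3000)

print(f"{sensor_limit:.2f} cycles-per-pixel")  # 0.50 cycles-per-pixel
print(f"{modest_lens:.2f} cycles-per-pixel")   # 0.12 cycles-per-pixel
```

A sensor working at its own limit always comes out at 0.5 cycles-per-pixel whatever its size or pixel count, which is what makes the figure a convenient yardstick.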

To go in reverse, and change from cycles-per-pixel to line-pairs per millimetre, you need to know the resolution figure, the sensor size and the sensor’s pixel count. The graph shown above, for example, indicates a maximum resolution of about 0.34 cycles-per-pixel. That means it will require about three pixels to record a full cycle, which is the same thing as a line-pair. The camera used in this case was a Nikon D700, which has 4256 pixels across the width of its sensor, so that means it can record one-third that number of line-pairs, or 0.34 x 4256 to be exact, giving 1447 line-pairs. Given that the D700 is a full-frame camera with a sensor that is 36mm wide, the 1447 line-pairs in total scale down to 40 line-pairs per millimetre (1447 divided by 36).
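The same conversion can be written as a short Python sketch. The function name is made up for illustration; the inputs are the figures quoted above (0.34 cycles-per-pixel, 4256 pixels, 36mm).

```python
def cycles_per_pixel_to_lp_per_mm(
    cycles_per_pixel: float, pixels_across: int, width_mm: float
) -> tuple[float, float]:
    """Return (total line-pairs across the frame, line-pairs per millimetre),
    treating one cycle as one line-pair."""
    total_line_pairs = cycles_per_pixel * pixels_across
    return total_line_pairs, total_line_pairs / width_mm


# Nikon D700 figures from the text: 4256 pixels across a 36mm-wide sensor
total, per_mm = cycles_per_pixel_to_lp_per_mm(0.34, 4256, 36.0)
print(f"{total:.0f} line-pairs in total")  # 1447 line-pairs in total
print(f"{per_mm:.1f} lp/mm")               # 40.2 lp/mm
```

The result agrees with the hand calculation: roughly 1447 line-pairs across the frame, or about 40 line-pairs per millimetre.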

Some people may think that’s a rather modest performance but it is already above the Nyquist Criterion threshold, so the lens is already a good match for the D700 and little would be gained by designing it with an even higher level of resolution.