What Digital Camera takes a closer look at colour: what is colour, how we see it and how we manage it within photography.

What is colour?

Colour is a perception created when our visual system responds to a small section of the electromagnetic spectrum. This section lies between around 400nm and 700nm, and is known as the visible spectrum. Outside of this lie other forms of radiation, such as ultraviolet and infrared; we can’t see these, but if a camera responds to them they can also be used for imaging.

The visible part provides our eyes with the stimulation we need to see all colours, from purples and blues at the shorter-wavelength end of the range, through to greens and yellows, and finally to red at the other end. The colours we eventually see depend on three main things: the light source, the subject we are looking at and our visual system.

Light Sources

Light sources can be either natural, such as daylight, or artificial, such as tungsten or fluorescent lighting.

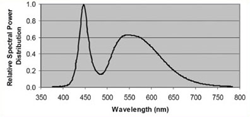

The light source plays a vital part in the colour that is perceived, as an object can only reflect the light under which it is viewed. To give an example, daylight is considered to be neutral because its power is spread roughly evenly across the visible spectrum (this spread is known as its spectral power distribution). Tungsten, on the other hand, appears much warmer to us than daylight, because its output is biased towards yellows and reds rather than blues.

Objects

Aside from self-luminous objects such as lamps, which are seen by the light they emit, we see objects because of the light they reflect. The light an object reflects and absorbs plays the second part in determining colour. Under daylight, for example, a red apple will appear red, as it will reflect red light and absorb blue and green. Yet when another light source is used, the same object can appear very different.

A common example is vehicles viewed under sodium-vapour streetlights. Unlike daylight or tungsten lights, which have a continuous spread of power across the visible spectrum, these lights have a very narrow peak in their spectral power distributions. As the light they emit only covers a small section of the visible spectrum, vehicles of certain colours may reflect little or none of it, and if they don’t reflect any light they simply appear black.
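The streetlight effect can be sketched numerically by multiplying a light source's power by a surface's reflectance, band by band. The snippet below is a toy model only: the five sample wavelengths, the two spectral power distributions and the paint reflectance are made-up illustrative values, not measurements, and the sodium lamp is assumed to emit in a single narrow band near 590nm.

```python
# Toy model: light reaching the eye = illuminant power x surface reflectance,
# evaluated band by band. All numbers are illustrative, not measured data.

BANDS_NM = [450, 500, 550, 590, 650]  # coarse sample wavelengths

# Hypothetical spectral power distributions (relative units).
DAYLIGHT = [1.0, 1.0, 1.0, 1.0, 1.0]  # roughly even spread of power
SODIUM = [0.0, 0.0, 0.0, 1.0, 0.0]    # assumed single narrow peak near 590nm

# Hypothetical reflectance of blue car paint: reflects short wavelengths only.
BLUE_PAINT = [0.8, 0.3, 0.05, 0.0, 0.0]


def reflected(illuminant, reflectance):
    """Return the reflected power in each band."""
    return [power * refl for power, refl in zip(illuminant, reflectance)]


def total_reflected(illuminant, reflectance):
    """Return the total reflected power summed over all bands."""
    return sum(reflected(illuminant, reflectance))


for name, source in (("daylight", DAYLIGHT), ("sodium", SODIUM)):
    print(name, reflected(source, BLUE_PAINT), total_reflected(source, BLUE_PAINT))
```

Under the even daylight spectrum the paint returns most of its light in the short-wavelength bands and so looks blue; under the narrow sodium peak nothing is reflected in this model, which corresponds to the car appearing black.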

Human Visual System

Almost all the colours we see can be created by mixing different amounts of the three primaries: red, green and blue. In the eye, one type of photoreceptor (rods) deals with light and another (cones) with colour, and each is sensitive to a part of the visible spectrum. The cones come in three types, with peak sensitivities in the blue, green and red regions (short, medium and long wavelengths respectively), although their responses overlap and they are not equally sensitive.

Although there have been different theories as to how we ‘see’ and process colour information, it is now believed that the information from both the light-sensitive rods and colour-sensitive cones is translated into three channels: one for lightness, and two for colour.

One colour channel deals with reds and greens, while the other deals with blues and yellows. Certain colour spaces, such as CIELAB (LAB), were created on this principle; the L* channel deals with lightness, while the a* and b* channels deal with red/green and yellow/blue respectively.

This opponent pairing is down to our inability to see certain colours together (i.e. a reddish green or a yellowish blue).
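To see the lightness and opponent channels in numbers, the sketch below converts 8-bit sRGB values to CIELAB using the standard sRGB and CIELAB formulas. It assumes a D65 white point and the commonly quoted sRGB-to-XYZ matrix; the function names are illustrative rather than taken from any particular library.

```python
# Sketch: 8-bit sRGB -> CIE XYZ (D65) -> CIELAB.

D65_WHITE = (0.95047, 1.0, 1.08883)  # assumed reference white (Xn, Yn, Zn)


def srgb_to_linear(value):
    """Undo the sRGB transfer curve for one 0-255 channel value."""
    c = value / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def lab_f(t):
    """CIELAB helper: cube root with a linear segment near black."""
    delta = 6 / 29
    return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29


def srgb_to_lab(r, g, b):
    """Return (L*, a*, b*) for an 8-bit sRGB colour."""
    rl, gl, bl = (srgb_to_linear(v) for v in (r, g, b))
    # Linear sRGB to XYZ, D65 white point.
    x = 0.4124564 * rl + 0.3575761 * gl + 0.1804375 * bl
    y = 0.2126729 * rl + 0.7151522 * gl + 0.0721750 * bl
    z = 0.0193339 * rl + 0.1191920 * gl + 0.9503041 * bl
    fx, fy, fz = (lab_f(v / n) for v, n in zip((x, y, z), D65_WHITE))
    lightness = 116 * fy - 16   # L*: lightness
    a_star = 500 * (fx - fy)    # a*: green (negative) to red (positive)
    b_star = 200 * (fy - fz)    # b*: blue (negative) to yellow (positive)
    return lightness, a_star, b_star


for name, rgb in (("red", (255, 0, 0)), ("green", (0, 255, 0)),
                  ("blue", (0, 0, 255)), ("white", (255, 255, 255))):
    print(name, [round(v, 1) for v in srgb_to_lab(*rgb)])
```

Pure red gives a positive a* and pure green a negative one, while pure blue gives a negative b*; white sits at L* of about 100 with both colour channels close to zero.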

Managing colour

In digital photography, we rely on data based on the human visual system and its response to different colours in order to define colour numerically. This helps us to manage colours across different devices, which is vital as there are many reasons why colours on one device will reproduce differently on another, such as the device’s characteristics, the age and condition of the equipment, and the gamut into which colours are mapped. Knowing how to correct for these differences is key to ensuring accurate colour reproduction through an imaging chain.

Colour management is an extensive subject, although understanding just a few principles can quickly bring benefits.

The first is monitor calibration, in which the output of a display is analysed and any necessary changes are made so that colour is reproduced faithfully. The process itself doesn’t take very long, although you do need hardware that is capable of taking accurate readings from your monitor. Profiling is related to calibration, although rather than changing something about a device, profiling aims to describe its characteristics. If every device within an imaging chain has a profile attached to it, the characteristics of each may be accounted for.

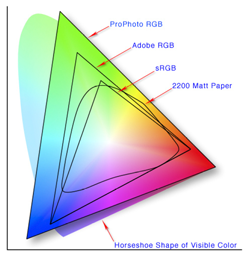

The second is the issue of colour space, which is a mathematical model in which colours are plotted. Adobe RGB has a slightly broader gamut than sRGB, and so it is often recommended as the default capture space, although if your images are only ever destined to end up on the web you’re better off capturing in sRGB. This is because sRGB is the space that most browsers and displays assume, so colours appear as intended, whereas an Adobe RGB image shown without colour management can look flat; the wider Adobe RGB gamut is a better starting point if your images are to be printed. Shooting Raw images means that you’re not tied to any one colour space upon capture, which gives you the most flexibility when it comes to processing and outputting your images.
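As a rough illustration of the difference in gamut, the sketch below compares the area of the triangle that each colour space's red, green and blue primaries form on the CIE xy chromaticity diagram. The primaries are the published chromaticities for sRGB and Adobe RGB (1998); triangle area on this diagram is only a crude proxy for gamut size, as it ignores lightness and is not perceptually uniform.

```python
# Chromaticity (x, y) of the red, green and blue primaries, as published
# for sRGB and Adobe RGB (1998).
SRGB = [(0.64, 0.33), (0.30, 0.60), (0.15, 0.06)]
ADOBE_RGB = [(0.64, 0.33), (0.21, 0.71), (0.15, 0.06)]


def triangle_area(primaries):
    """Area of the triangle formed by three (x, y) points (shoelace formula)."""
    (x1, y1), (x2, y2), (x3, y3) = primaries
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


srgb_area = triangle_area(SRGB)
adobe_area = triangle_area(ADOBE_RGB)
ratio = adobe_area / srgb_area

print(f"sRGB area:      {srgb_area:.4f}")
print(f"Adobe RGB area: {adobe_area:.4f}")
print(f"Adobe RGB / sRGB: {ratio:.2f}")
```

The two spaces share their red and blue primaries, so the extra area comes entirely from Adobe RGB's more saturated green primary, which extends the gamut through the greens and cyans.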